Abstract:

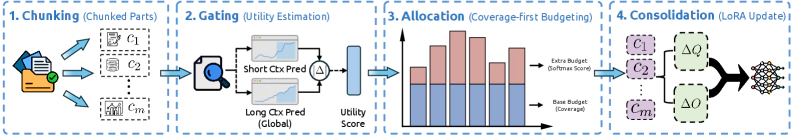

Long contexts challenge transformers: attention scores dilute across thousands of tokens, and critical information in the middle of the input is often lost. We reframe test-time adaptation as a budget-constrained memory consolidation problem and propose Gdwm (Gated Differentiable Working Memory). Gdwm introduces a write controller that estimates Contextual Utility, an information-theoretic measure of long-range contextual dependence, and uses it to allocate a limited budget of gradient steps. Experiments on ZeroSCROLLS and LongBench v2 show that Gdwm achieves performance comparable or superior to uniform baselines while using 4× fewer gradient steps, establishing a new efficiency-performance Pareto frontier for test-time adaptation.
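The abstract does not define Contextual Utility or the rule the write controller uses to allocate steps. Purely as an illustration of budget-constrained allocation, the sketch below assumes a hypothetical utility proxy: the mean per-token log-likelihood gain from conditioning on the full long context versus a short local window (a pointwise-mutual-information style estimate). It then splits a fixed budget of gradient steps across segments in proportion to positive utility. The names `contextual_utility` and `allocate_steps` are hypothetical and are not Gdwm's implementation.

```python
from typing import List, Sequence


def contextual_utility(logp_full: Sequence[float], logp_local: Sequence[float]) -> float:
    """Hypothetical proxy for one segment's Contextual Utility (assumption).

    Mean per-token gain in log-likelihood when the model sees the full long
    context instead of only a short local window. This is not the paper's
    definition, only an illustrative information-theoretic stand-in.
    """
    if len(logp_full) != len(logp_local) or not logp_full:
        raise ValueError("need equal-length, non-empty log-prob lists")
    gains = [full - local for full, local in zip(logp_full, logp_local)]
    return sum(gains) / len(gains)


def allocate_steps(utilities: Sequence[float], budget: int) -> List[int]:
    """Split an integer budget of gradient steps across segments (assumed rule).

    Steps are proportional to max(utility, 0). Rounding uses the
    largest-remainder method so the allocation sums exactly to `budget`.
    If no segment has positive utility, this falls back to a uniform split,
    i.e. the kind of baseline the abstract compares against.
    """
    if budget < 0:
        raise ValueError("budget must be non-negative")
    n = len(utilities)
    if n == 0:
        return []
    weights = [max(u, 0.0) for u in utilities]  # negative utility gets no steps
    total = sum(weights)
    if total == 0.0:
        weights = [1.0] * n
        total = float(n)
    shares = [budget * w / total for w in weights]
    steps = [int(s) for s in shares]  # floor, since shares are non-negative
    leftover = budget - sum(steps)
    # Hand the remaining steps to segments with the largest fractional parts;
    # Python's sort is stable, so ties go to the earlier segment.
    order = sorted(range(n), key=lambda i: shares[i] - steps[i], reverse=True)
    for i in order[:leftover]:
        steps[i] += 1
    return steps


if __name__ == "__main__":
    utils = [0.5, 0.25, 0.25, -0.5]
    print(allocate_steps(utils, 8))  # high-utility segments get most steps
```

With utilities `[0.5, 0.25, 0.25, -0.5]` and a budget of 8 steps, this assumed rule yields `[4, 2, 2, 0]`, whereas a uniform split would give 2 steps to every segment, including the one whose long-range context appears not to help. Proportional allocation is only one plausible choice; a gated controller could equally use thresholds or top-k selection.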